By: Dana Sackett

While the title of this article may bring to mind runway models in lab coats, this week I want to discuss those ‘super’ models that scientists use to describe, understand, and predict the world around us. These types of models allow us to better understand why an ecosystem is the way it is and anticipate what will happen in a given ecosystem or region under different circumstances. This can help us make choices that will either preserve or change the system, or take action ourselves (for example, evacuating if a model shows that a hurricane is likely to hit your hometown).

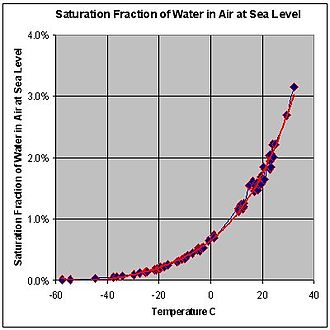

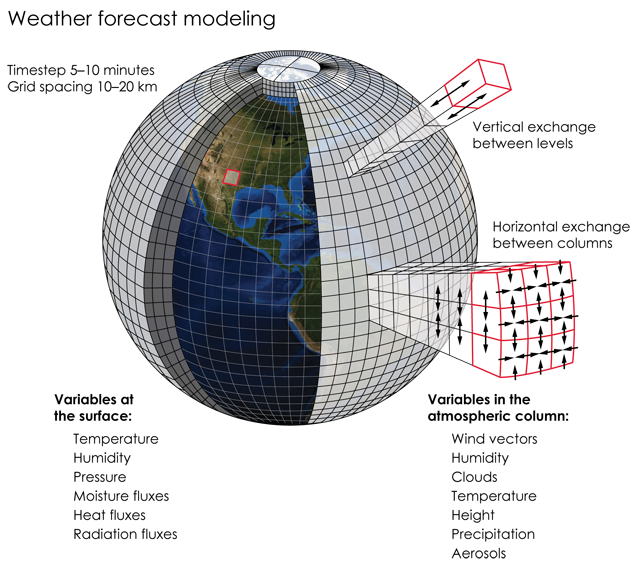

The first step scientists take in creating these types of ‘super’ models is to describe the spatial area and time frame they are interested in (a lake over a few months or the whole earth over centuries). The next is to begin to define that system based on the smaller pieces that make up the whole, such as how much water the air can hold at different temperatures, how many eggs a fish of a certain size and species can produce, or the physical laws of how fluids move. However, it is important to realize that any model is only an approximation of the real system; the natural world is so complex that we could never describe it perfectly with a model. It therefore takes a lot of little pieces of known information about the natural world to create a model that can represent even relatively simple ecosystems.

These smaller pieces of the larger ecosystem are often called functional relationships (when changing one thing systematically changes something else) or laws, and they can be described using mathematical equations. These equations are turned into computer code that relates all of these smaller parts to each other. When a needed piece of information is missing, scientists can run an experiment to estimate it, allow the model to approximate it within a reasonable range of possibilities, or estimate it themselves using the best data available. They can even incorporate factors that are known to fluctuate randomly. It is the combination of all of these little pieces of information that makes up these ‘super’ models.
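To make the idea of turning functional relationships into code concrete, here is a minimal sketch in Python. It uses the Magnus approximation for how much water vapor air can hold at a given temperature, plus a power-law relationship between fish length and egg production. The fecundity coefficients `a` and `b`, the `spread` of the random variation, and all function names are assumptions chosen only for illustration; they do not come from any particular species or study.

```python
import math
import random


def saturation_vapor_pressure_hpa(temp_c):
    """Approximate saturation vapor pressure over water (hPa), Magnus formula."""
    return 6.1094 * math.exp(17.625 * temp_c / (temp_c + 243.04))


def expected_eggs(length_cm, a=0.5, b=3.0):
    """Hypothetical power-law fecundity: eggs = a * length^b (a and b are made up)."""
    return a * length_cm ** b


def eggs_with_random_variation(length_cm, rng, spread=0.2):
    """Multiply the expected value by a log-normal factor to mimic random fluctuation."""
    return expected_eggs(length_cm) * rng.lognormvariate(0.0, spread)


# Seeded generator so the random draw is repeatable from run to run.
rng = random.Random(42)

for temp_c in (0, 10, 20, 30):
    pressure = saturation_vapor_pressure_hpa(temp_c)
    print(f"{temp_c:>2} °C: saturation vapor pressure ~ {pressure:.1f} hPa")

eggs = eggs_with_random_variation(40, rng)
print(f"Eggs for a 40 cm fish (one random draw): {eggs:.0f}")
```

A full ecosystem or climate model chains together many relationships like these, with the output of one function feeding the inputs of others.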

After a model has been created, the next step is to determine how good the model is at representing the system it is meant to describe. The most common way to do this is to have the model predict something for which data have already been collected and compare the model results to the actual data. If there is a close match between what the model says and the observed data, then the model is considered useful. Once there is a match, scientists can add or take out different factors to determine how much of an impact those factors have had on the current system. A famous example of this was presented by Hegerl and others in 2007. They demonstrated that climate models matched measured temperature data over the last several decades only when human forces were included alongside natural forces; when the human forces were excluded, the results no longer matched.
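The comparison step described above can be sketched in a few lines of Python. The numbers below are invented purely for illustration (they are not real temperature measurements or output from any climate model), and root-mean-square error (RMSE) is just one common way to score how closely predictions match observations.

```python
import math


def rmse(predicted, observed):
    """Root-mean-square error between model predictions and observations."""
    if len(predicted) != len(observed):
        raise ValueError("predicted and observed must have the same length")
    squared_errors = [(p - o) ** 2 for p, o in zip(predicted, observed)]
    return math.sqrt(sum(squared_errors) / len(squared_errors))


# Made-up illustrative values: a quantity observed at five points in time,
# and the modeled contribution of two groups of factors to that quantity.
observed = [0.00, 0.10, 0.25, 0.45, 0.70]
natural_effect = [0.00, 0.02, -0.01, 0.03, 0.01]
human_effect = [0.00, 0.07, 0.24, 0.44, 0.66]

# Run the toy "model" with every factor, then with one group taken out.
all_factors = [n + h for n, h in zip(natural_effect, human_effect)]
natural_only = natural_effect

print(f"RMSE with all factors:  {rmse(all_factors, observed):.3f}")
print(f"RMSE with natural only: {rmse(natural_only, observed):.3f}")
```

In this toy setup, the run with a factor removed fits the observations much worse, which is the kind of evidence that suggests the removed factor matters.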

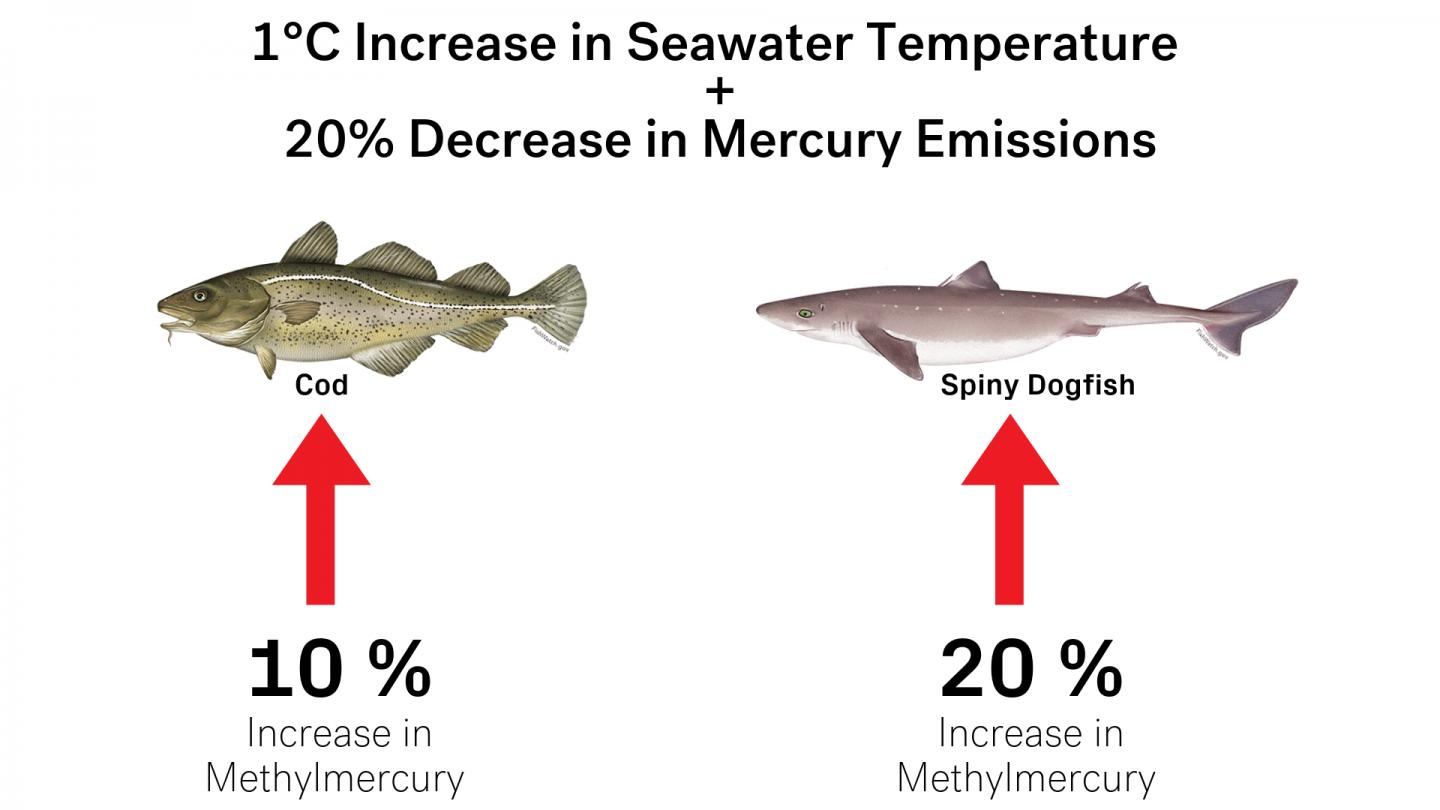

This is also an example of how you can ask a model “what if” questions, like “what would happen to the mercury levels in marine predators if herring were overfished in the Gulf of Maine?” Now this may seem like a pretty specific “what if” question, but it is one that scientists were recently able to answer using a model. It turned out that the overfishing of herring in the 1970s caused one fish species to have lower mercury, because it replaced the herring in its diet with lower-mercury prey, but caused another to accumulate more mercury after switching to higher-mercury prey. However, diet is not the only thing that can affect mercury levels in fish. Increases in water temperature can increase the metabolic rate of fish, causing them to eat more and accumulate more mercury. So, these researchers modeled numerous scenarios on how prey availability, rising sea temperatures, mercury emissions, and overfishing impacted the levels of mercury in fish. Some of their findings included:

– A 1°C increase in seawater temperature and a collapse in the herring population would result in a 10% decrease in mercury levels in cod and a 70% increase in spiny dogfish.

– A 20% decrease in emissions, with no change in seawater temperatures, would decrease mercury levels in both cod and spiny dogfish by 20%.
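To give a feel for how “what if” scenarios like these are set up, here is a deliberately simple toy in Python. It is not the researchers' model: the prey categories, relative mercury values, diet fractions, and the `intake_per_degree` parameter are all hypothetical, and the index it computes is only a diet-weighted average scaled by extra feeding in warmer water.

```python
def dietary_mercury_index(diet, prey_mercury, temperature_change_c=0.0,
                          intake_per_degree=0.05):
    """Toy index: diet-weighted prey mercury, scaled up when warmer water boosts feeding."""
    if abs(sum(diet.values()) - 1.0) > 1e-9:
        raise ValueError("diet fractions must sum to 1")
    weighted = sum(fraction * prey_mercury[prey] for prey, fraction in diet.items())
    return weighted * (1.0 + intake_per_degree * temperature_change_c)


# Hypothetical relative mercury content of three prey categories.
prey_mercury = {"herring": 1.0, "low_mercury_prey": 0.5, "high_mercury_prey": 2.0}

# Hypothetical diets: a baseline, and two ways a predator might respond
# if herring were no longer available.
baseline = {"herring": 0.6, "low_mercury_prey": 0.2, "high_mercury_prey": 0.2}
switch_to_low = {"herring": 0.0, "low_mercury_prey": 0.8, "high_mercury_prey": 0.2}
switch_to_high = {"herring": 0.0, "low_mercury_prey": 0.2, "high_mercury_prey": 0.8}

scenarios = {
    "baseline": (baseline, 0.0),
    "herring collapse, switch to low-mercury prey": (switch_to_low, 0.0),
    "herring collapse, switch to high-mercury prey": (switch_to_high, 0.0),
    "herring collapse, high-mercury prey, +1 °C": (switch_to_high, 1.0),
}

for name, (diet, warming) in scenarios.items():
    index = dietary_mercury_index(diet, prey_mercury, warming)
    print(f"{name}: {index:.2f}")
```

Each scenario changes only the inputs while the rules stay the same, which is what lets a model separate the effect of diet from the effect of temperature.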

While models are extremely important in helping us gain understanding, they have some major limitations. Most importantly, it is necessary to recognize that all models are inherently wrong. They may get close to representing a system, but there is no way to include all of the complexity of nature over time and space in a model. Further, the more complex the model, the more computing power and time are needed to run it. For instance, many climate and weather models run on supercomputers that could fill a warehouse. As for time, even a relatively simple simulation model that I have used to estimate how many fish need to be tagged to get a reliable estimate of fishing harvest can take hours to run. Even so, models are our best way to determine how our choices are likely to impact the future.
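As a rough illustration of why simulation models take time, here is a small Monte Carlo sketch in Python of a tagging question like the one mentioned above. It is not the model I used: it assumes each tagged fish is harvested independently with the same probability, that every harvested tag is reported, and the harvest rate, sample sizes, and number of trials are all made-up values.

```python
import random
import statistics


def simulate_harvest_estimates(n_tagged, true_harvest_rate, n_trials, rng):
    """Repeat a tagging study many times and return the harvest-rate estimate from each."""
    estimates = []
    for _ in range(n_trials):
        # Each tagged fish is independently harvested with the true rate.
        recovered = sum(1 for _ in range(n_tagged) if rng.random() < true_harvest_rate)
        estimates.append(recovered / n_tagged)
    return estimates


rng = random.Random(1)  # seeded so the runs are repeatable
true_rate = 0.2         # hypothetical fraction of fish harvested

for n_tagged in (50, 200, 800):
    estimates = simulate_harvest_estimates(n_tagged, true_rate, 500, rng)
    mean = statistics.mean(estimates)
    spread = statistics.stdev(estimates)
    print(f"{n_tagged:>3} tagged: mean estimate {mean:.3f}, spread {spread:.3f}")
```

The spread of the estimates shrinks as more fish are tagged, but the work grows with the number of fish times the number of trials, so adding realism (tag loss, unreported catches, several fishing seasons) can quickly push run times from seconds to hours.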

I also want to make clear that I have barely scratched the surface of all the different types of models currently out there, and with scientists continuing to create new and improved models to obtain better and more accurate results each year, there will be many more to come.

References and more interesting material:

Börner K, Boyack KW, Milojević S, Morris S. 2012. An Introduction to Modeling Science: Basic Model Types, Key Definitions, and a General Framework for the Comparison of Process Models. In: Scharnhorst A, Börner K, van den Besselaar P (eds) Models of Science Dynamics. Understanding Complex Systems. Springer, Berlin, Heidelberg.

Canham CD, Cole JJ, Lauenroth WK. 2003. Models in Ecosystem Science. Princeton University Press. 476 pp.

Hegerl GC, Crowley TJ, Allen M, Hyde WT, Pollack HN, Smerdon J, Zorita E. 2007. Detection of human influence on a new, validated 1500-year temperature reconstruction. Journal of Climate 20:650-666.

IPCC. 2007. Climate Change 2007: The Physical Science Basis. Contribution of Working Group I to the Fourth Assessment Report of the Intergovernmental Panel on Climate Change. Contributing Authors: Arblaster J, Brasseur G, Christensen JH, Denman K, Fahey DW, Forster P, Jansen E, Jones PD, Knutti R, Treut HL, Lemke P, Meehl G, Mote P, Randall D, Stone DA, Trenberth KE, Willebrand J, Zwiers F. IPCC, Geneva, Switzerland, 21 pp.

https://www.climate.gov/maps-data/primer/climate-models

http://dx.doi.org/10.1038/s41586-019-1468-9

Below is an excellent TED talk about how climate models work: